There are two stories about AI and performance management going around right now. One says AI will replace managers. The other says AI is just a faster autocomplete for the forms HR was already making people fill out. Both are wrong.

The accurate story is that AI handles, for the first time at scale, two parts of performance management that managers were never going to do well on their own: capture in flow and synthesis at scale. It doesn't write the conversation. It doesn't make the development decision. It doesn't replace the manager. What it does is move the floor — the worst-case version of how a manager runs a 1:1 or writes a summary now starts from a much better baseline than it ever did before, and the best-case version is reachable by people who couldn't get there without help.

This post is about what AI actually changes in performance management, what it doesn't, and where the line has to sit.

Why managers historically avoided feedback

Most managers don't avoid feedback because they don't care. They avoid it because the workflow asks them to do something that's hard at scale: remember twelve months of micro-observations across five to fifteen direct reports, sort the noise from the signal, deliver each piece in a calibrated tone at the right moment, and write a coherent summary of all of it twice a year.

In practice, every part of that ask gets compromised. Observations don't get captured because the manager is in flow on something else. Sorting doesn't happen because there's no time set aside for it. Delivery gets delayed (a failure mode we've covered in detail elsewhere) until the conversation is six weeks late and ten times harder. The summary at year-end is built from memory, which means it's mostly the last six weeks plus whatever events were unusual enough to remember.

This isn't a manager-quality problem. It's a workload problem. The expected level of care, multiplied by the number of reports, multiplied by the number of weeks in a quarter, is more cognitive load than any human can sustainably carry. The system has been forcing managers to choose between doing the job badly and skipping parts of it.

Most chose to skip parts of it. Annual reviews, calibration meetings, structured 360s — these are all elaborate workarounds for what was always a workload problem.

The administrative burden problem

Set the cognitive load aside and the math still doesn't work.

A manager with eight direct reports running weekly 1:1s plus quarterly summaries is committed to roughly 432 structured conversations a year — fifty 1:1s per person plus four quarterly summaries each. Each conversation requires preparation, the meeting itself, and follow-up. The minimum reasonable per-conversation overhead is thirty to forty-five minutes when done well.

Multiply out: at thirty to forty-five minutes each, 432 conversations come to somewhere between roughly 215 and 325 hours a year on conversations alone. That is five to eight full weeks of work, before any of the other parts of the job. Add quarterly summary writing, mid-cycle adjustments, annual narratives for HR, and calibration prep, and the total can swell past 350 hours.
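The arithmetic is easy to check. The sketch below recomputes it from the figures in this section; the 40-hour work week and the function names are our assumptions for illustration.

```python
# Back-of-envelope check of the conversation workload described above.
# Assumption: a "full week" means 40 working hours.

def conversation_load(reports, one_on_ones_per_report=50, summaries_per_report=4):
    """Return the number of structured conversations per year."""
    return reports * (one_on_ones_per_report + summaries_per_report)


def hours_range(conversations, min_minutes=30, max_minutes=45):
    """Return (low, high) yearly hours for the given per-conversation overhead."""
    return conversations * min_minutes / 60, conversations * max_minutes / 60


conversations = conversation_load(reports=8)
low, high = hours_range(conversations)

print(f"{conversations} conversations, {low:.0f} to {high:.0f} hours a year")
print(f"{low / 40:.1f} to {high / 40:.1f} forty-hour weeks")
```

With eight reports this gives 432 conversations and about 216 to 324 hours, in the same range as the estimate above. Change `reports` to your own team size to see how quickly the total grows.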

This is the administrative burden that sits underneath every "managers should give more feedback" exhortation. The exhortation isn't wrong; the math just doesn't support it without help. The friction story we told in the previous post is the moment-to-moment version of the same problem. What changes the math isn't motivation. It's leverage.

What AI actually changes — capture, synthesis, and coaching

AI changes the math in two specific places. Both are operational, neither is magical.

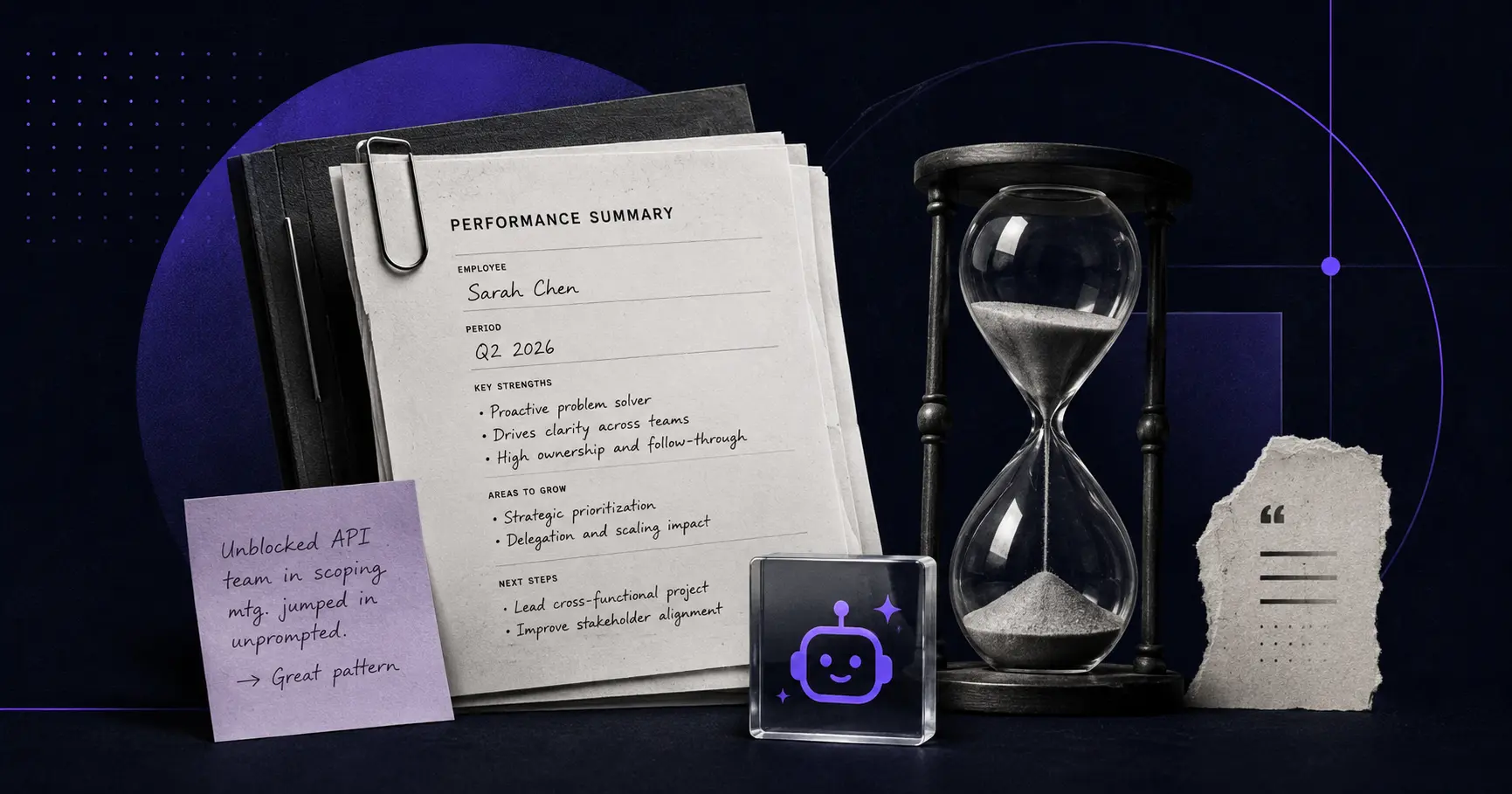

Capture and synthesis. A manager has dozens of small observations a week — too small to write up properly, too useful to lose. Without help, most of them disappear into mental notes that decay within hours. With AI in the loop, the manager can describe the moment in two sentences ("Sarah unblocked the API team this morning by jumping into their scoping meeting unprompted; she's been making more of those calls in the last few weeks"), and the system structures it into a proper observation, attaches it to the right person, files it where it belongs. The unit cost of capture drops from "a deliberate writing task" to "a thought spoken once." Volume goes up. Quality goes up.

At quarter close, the system synthesizes the captured observations into a draft summary. The manager reviews, edits, signs. The unit cost of writing drops from "four hours of remembering and drafting" to "thirty minutes of editing." The summary is grounded in what actually happened across the quarter, not reconstructed from memory.
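To make the capture-then-synthesize flow concrete, here is a minimal sketch of the data involved. The `Observation` fields, the function names, and the sample dates are hypothetical and chosen for illustration; this is not a description of any product's actual schema. It shows only the gathering step, where one quarter's observations are grouped by person so that a draft can be written from them.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Observation:
    """One captured moment. Field names are hypothetical."""
    person: str
    noted_on: date
    text: str


def quarter_of(d: date) -> tuple[int, int]:
    """Return (year, calendar quarter) for a date."""
    return d.year, (d.month - 1) // 3 + 1


def draft_outline(observations, year, quarter):
    """Group one quarter's observations by person, oldest first.

    This only gathers the raw material. Turning it into prose is the
    synthesis step, and the manager still reviews, edits, and signs.
    """
    by_person = defaultdict(list)
    for obs in sorted(observations, key=lambda o: o.noted_on):
        if quarter_of(obs.noted_on) == (year, quarter):
            by_person[obs.person].append(f"{obs.noted_on.isoformat()}: {obs.text}")
    return dict(by_person)


# Illustrative data only; the dates are made up.
notes = [
    Observation("Sarah", date(2025, 2, 11), "Unblocked the API team by joining their scoping meeting unprompted."),
    Observation("Sarah", date(2025, 1, 20), "Made a clear scoping call without being asked."),
    Observation("Sarah", date(2025, 5, 2), "Falls in the next quarter, so it is excluded."),
]
outline = draft_outline(notes, year=2025, quarter=1)
```

The point of the structure is that each observation carries its own date and owner, so the quarter-end draft starts from what was recorded rather than from what the manager happens to remember.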

Coaching prompts. The other thing AI does well is recognize the coaching moves a manager could be making and isn't. A manager about to walk into a difficult 1:1 can get a structured agenda — opening question, observation framing, recommended question to ask — that turns the meeting from improvised into prepared. A manager defaulting to "fix it for them" can get a prompt back asking whether they wanted to hand the report a question instead. The coaching gap that most managers walk into untrained gets partially filled by an agent that's seen ten thousand 1:1s and can recognize what works.

Performance Blocks runs both of these surfaces through Henry, our coaching agent. Henry drafts observations from quick descriptions, synthesizes summaries from the underlying data, walks managers through structured 1:1s, and prompts coaching questions in the moment. None of it replaces the conversation. All of it changes what arrives at the conversation. Annual reviews can finally die because the workflow that was supposed to replace them is finally cheap enough to actually run.

What AI uniquely enables — pattern recognition across teams

There's a third thing AI does that humans literally can't do, no matter how disciplined: see patterns across teams.

A senior leader running a 50-person engineering organization has, in principle, oversight of 50 careers. In practice, they have direct visibility into the 5–7 people who report to them and inferred visibility into the 40+ who don't. Most of what they "know" about those people is what their managers told them in calibration meetings — once a year, filtered through each manager's framing.

AI in performance management can sit across all the data and surface things no individual manager would notice. Specific patterns it does well:

Inconsistent feedback density across teams. Manager A captures three observations per report per quarter. Manager B captures fifteen. Either Manager B is over-monitoring or Manager A is under-noticing. Either has consequences. The org-level signal is invisible until something can see it.

Calibration drift. Two managers describing similar performance with different language ("reliable" versus "low-ceiling") produce divergent compensation outcomes for similar work. AI can flag the patterns where word choice and rating diverge across managers, surfacing the drift before it hardens into pay inequity.

Underrecognized contributions. A senior engineer mentioned twice as a critical collaborator in other people's observations, but never in her own manager's, is doing valuable work nobody is crediting. The cross-reference is hard for any single manager to do; trivial for a system that reads the file across the team.

Early signal on attrition risk. Patterns of changed cadence, missed 1:1s, and shifting tone in observations can flag attrition risk weeks before resignation conversations. Not as a replacement for the human signal, but as a check on it.

None of this replaces the manager's judgment. All of it gives the manager information they couldn't have gotten any other way. The org-level view becomes available because there's now a thing that can read across thousands of observations at once and find the patterns that don't fit the model.
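Two of the patterns above, feedback density and underrecognized contributions, reduce to simple aggregations once the observations sit in one place. The sketch below is a minimal illustration under stated assumptions: the input shapes, the names, and the 2x threshold are all hypothetical, and a real system would need far more care than this.

```python
from collections import Counter
from statistics import median


def density_outliers(per_report_counts, ratio=2.0):
    """Flag managers far from the median capture rate.

    per_report_counts maps manager -> observations per report per quarter.
    A manager is flagged when their rate is at least `ratio` times the
    median, or the median is at least `ratio` times their rate.
    The default threshold is an arbitrary illustrative choice.
    """
    mid = median(per_report_counts.values())
    return {
        manager: count
        for manager, count in per_report_counts.items()
        if count >= mid * ratio or count * ratio <= mid
    }


def underrecognized(mentions, min_peer_mentions=2):
    """Find people credited in others' observations but never by their own manager.

    mentions is a list of (author, person_mentioned, persons_own_manager).
    """
    by_peers, by_own = Counter(), Counter()
    for author, person, own_manager in mentions:
        if author == own_manager:
            by_own[person] += 1
        else:
            by_peers[person] += 1
    return sorted(
        person for person, n in by_peers.items()
        if n >= min_peer_mentions and by_own[person] == 0
    )


# Illustrative data only.
flagged = density_outliers({"A": 3, "B": 15, "C": 6, "D": 7})
overlooked = underrecognized([
    ("Mgr B", "Priya", "Mgr A"),
    ("Mgr C", "Priya", "Mgr A"),
    ("Mgr A", "Lee", "Mgr A"),
    ("Mgr B", "Lee", "Mgr A"),
])
```

Here `flagged` contains managers A and B, and `overlooked` contains Priya. Neither result is a verdict. Each is a prompt for a human to look closer, which is where the judgment stays.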

Where the line has to sit — ethics and transparency

AI in performance management raises real concerns. The thoughtful version of those concerns deserves a real answer.

Transparency about what's captured. Anyone whose performance is being observed by an AI deserves to know the system is in use, what it captures, and how the data feeds into decisions about them. Workflow-native systems have an advantage here: when a manager invokes a capture command in Slack, the report can see the action and (in well-designed systems) the resulting observation. The capture is visible, not surveillant. Hidden monitoring isn't part of any defensible model.

Bias amplification. Models trained on historical performance data can encode the same biases the historical data contained. A model that has learned from that data that "high performers communicate more often in writing" will rate verbal communicators lower. The mitigation is both technical (audit the model's outputs against demographic slices) and structural (use AI to surface observations and structure summaries, not to assign ratings or make compensation decisions). The further AI sits from the actual judgment, the less harm a biased model can do.

Replacement of the conversation. This is the worry that gets the most airtime and is the easiest to address: AI doesn't replace the conversation between a manager and a direct report. It changes what arrives at the conversation. A manager who tries to use Henry — or any AI system — to skip the conversation is misusing it, and the failure mode is recognizable from the outside. The check is to keep human signoff on every consequential decision and to keep the AI inside the prep-and-synthesis layer, not the deliberation layer.

Data governance. Performance data is sensitive. Where it's stored, who has access, how it's encrypted, what model providers see it — these are real questions, and they should be answered explicitly by any vendor selling AI-assisted performance management. We're explicit about ours; any tool worth using will be similarly explicit about theirs.

The right question isn't whether AI in performance management is risky. It is, and so is performance management without AI. The question is which tradeoffs are acceptable, and the answers depend on getting the design right: transparency by default, AI in support of the manager rather than as a substitute for them, and human signoff on every consequential decision.

Frequently asked questions

How is AI changing performance management?

AI changes two specific things: it lowers the cost of capturing observations as work happens (from "a deliberate writing task" to "a thought spoken once") and it synthesizes summaries from the captured observations rather than from memory. It also enables pattern recognition across teams — flagging calibration drift, underrecognized contributions, and feedback density patterns no single manager could see alone. None of this replaces the manager; it handles the workload that always made performance management impossible to do well at scale.

Can AI replace performance reviews?

No. AI replaces the parts of the review workflow that were always administrative — capturing observations, sorting them, drafting summaries, prompting structured questions. It doesn't replace the conversation between a manager and a direct report, the development decision a manager makes about a person, or the rating decision an org makes at calibration. The conversation is irreducibly human; AI changes what arrives at the conversation, not what happens inside it.

What can AI do that managers can't in performance management?

AI can read every observation, summary, and 1:1 record across an organization, something no individual manager has the time or visibility to do. That makes patterns visible at the org level that aren't visible at the team level: calibration drift between managers, underrecognized contributors, density variations in feedback, early-signal patterns on attrition risk. None of this is judgment; it's signal aggregation that humans can't physically do at scale.

Is AI in performance management ethical?

It can be. The conditions are: transparency about what's captured and how it feeds decisions, audits against demographic bias, AI kept in the support layer (capture, synthesis, prompting) rather than the judgment layer (rating, compensation), and human signoff on every consequential decision. Hidden monitoring, AI-driven ratings, and unaudited models aren't defensible. The same AI applied differently is either a workload tool or a surveillance system; the design decisions are what determine which.

How does AI help managers without replacing them?

AI handles the parts of performance management that managers were never going to do well on their own — capturing in flow, synthesizing across volume, prompting structured next moves. The manager still has the conversation, makes the development call, and signs off on every decision. AI moves the floor: the worst-case version of how a manager runs a 1:1 or writes a summary starts from a much better baseline than it did before. The best-case version becomes reachable by managers who couldn't get there without help.

What we know — and what we're refining

If you manage people and you've been waiting to see whether AI in performance management is real, the move this week is small: pick one of the three things AI does well (capture, synthesis, or coaching prompts) and try it once. Capture an observation by talking to your phone instead of writing it. Have a draft 1:1 agenda generated and edit it. Try a coaching prompt before a difficult conversation. You'll see in one cycle whether it changes the rate at which you do the work performance management always required.

AI in performance management isn't going to replace managers, and it's not just a faster autocomplete for the forms HR was already making people fill out. It changes the operational layer — capture, synthesis, pattern recognition — without changing the human layer underneath. We've built Performance Blocks around that distinction. Henry handles the workload that was always the bottleneck on doing the job well; the manager still has the conversation, makes the development call, and decides what comes next. The line between support and replacement isn't blurry; it's load-bearing. We've designed the product to keep the line clear, and we think every defensible AI system in this category will end up doing the same.

The detail we're still refining is which AI moves help most at which stage of a manager's experience. The patterns we see suggest newer managers benefit most from coaching prompts and structured agendas (the moves they would not otherwise know to make), while experienced managers get the most leverage from capture and synthesis (what they could already do, just faster). The right mix shifts as the manager grows into the work. If you've watched a manager's relationship to AI tooling change over a year or two, we'd genuinely like to hear what shifted.

AI is changing performance management forever — but not in the directions either of the dominant narratives predicted. It's not replacing managers. It's not a thin wrapper on the same broken forms. It's making the work of being a good manager finally tractable for the average manager, not just the exceptional one. That's the part that's actually new.